OpenAI recently published a deep-dive on their internal data agent. It reasons over 600+ petabytes and 70k datasets. Their biggest takeaway wasn't about model capability. It was about context. They built six layers of it (table-level knowledge, human annotations, institutional memory) just to get their agent to write correct SQL.

We've been solving a version of the same problem, except in e-commerce. And I'll say this plainly: the "connect your LLM to Meta Ads via MCP" approach that everyone's excited about doesn't produce accurate analytics. Not because the LLM is bad. Because the data is bad.

What raw e-commerce data actually looks like

Here's what a real Shopify store's UTM source data looks like after 6 months of running ads, affiliates, and retention campaigns. These are actual values we've seen across customer accounts:

bik, bitespeed, Bitespeed, cashkaro, Cashkaro, NSD, affluence_ig, chatgpt, chatgpt.com, nector, kwikengage, Gokwik, NitroAds, trackier_51, swopstore_{Swopstore}

No naming convention. No consistency. Some are tool names, some are abbreviations only one person on the team knows, some have curly braces from template variables that didn't resolve. And this is just UTM source. UTM medium and UTM campaign are equally chaotic.

Now imagine an LLM trying to answer: "How much revenue came from our affiliate channels last quarter?"

It doesn't know that NSD means "non-stop deals," an affiliate partner. It doesn't know that cashkaro and Cashkaro are the same platform. It doesn't know that trackier_51 is an affiliate tracking ID. Without this context, the LLM will give you an answer. It will sound confident. And it will be wrong.

Three layers of data problems

Through working with dozens of D2C brands, we've found the mess falls into three distinct layers.

Layer 1: Transformation. This is the mechanical stuff. Google Ads reports spend in micros (millionths of a dollar). Shopify reports in the store's base currency. One brand might run campaigns in both INR and USD. Before any analysis, these numbers need to be converted to the same unit. If you skip this, your cross-platform ROAS calculations are off from the start, and that's the kind of drift that makes it hard to diagnose the root cause of a ROAS drop.

Layer 2: Nomenclature cleaning. This is where user-entered data creates chaos. UTM parameters are the biggest offender because they're free text fields that multiple people (internal teams, agencies, affiliate partners) all populate differently. Someone enters IG, someone else enters Instagram, a third person enters ig_click. They all mean the same thing. Same problem exists with Shopify tags and note attributes. Some brands use tags for exchange orders, others use note_attributes, others change the order name entirely. These are business-specific conventions that no LLM can infer.

Layer 3: Business context mapping. This is the layer almost nobody talks about. Even after you clean cashkaro and Cashkaro into CashKaro, you still need to know that CashKaro is an affiliate channel. And that bik and bitespeed are both retention tools (now consolidated under Retention). Without this mapping, you can never answer questions like "what's my acquisition vs. retention spend?" because the system doesn't know which channels belong to which category.

The same problem exists for product data. Shopify gives you collections and individual product information. But internally, a brand thinks in terms of "innerwear" and "outerwear," categories Shopify doesn't natively support. You have to build a SKU-to-subcategory mapping manually.

Why MCP + LLM doesn't solve this

The current excitement around connecting AI agents to data via MCP servers is warranted. It's a good pattern. But when someone asks their Claude-powered agent "how are my affiliate channels performing?", here's what actually happens if you're just piping raw data through:

The LLM has to first figure out what qualifies as an "affiliate channel." It might look at UTM sources and try to classify them. With every query, it's burning tokens on data interpretation instead of analysis. And you're betting it won't hallucinate during that classification step. One wrong mapping, attributing NSD to organic instead of affiliate, and your entire channel performance picture shifts.

This isn't a model intelligence problem. Claude and GPT are genuinely impressive at reasoning. The issue is that you're asking the model to do two jobs simultaneously: understand your business taxonomy AND analyze the data. The first job should be done once and persisted. The second is what the LLM is actually good at.

OpenAI arrived at the same conclusion internally. Their data agent doesn't just write SQL. It references curated context layers where domain experts have documented what each table means, how metrics are defined, what "active user" means in different regions. That institutional knowledge isn't something you can prompt-engineer away.

How we built the semantic layer

Our approach has three components.

First, we ingest all raw data from Shopify, Meta, Google, and any connected platforms. This handles the transformation layer: unit conversion, currency alignment, metric standardization.

Second, we surface every unique UTM source, medium, and campaign value in a dashboard alongside its frequency. If NSD shows up in 305 orders, it surfaces. If swopstore_{Swopstore} shows up in 1,133 orders, it surfaces. The frequency matters because it tells you which unmapped values are actually significant versus one-off noise.

Third, we provide a mapping interface where brands define their own business taxonomy. The mapping is a simple key-value structure: if utm_source equals NSD, map it to Non-Stop Deals. If the acquisition channel for Non-Stop Deals is Affiliate, set that too. Same pattern for product categories: map SKUs to subcategories to main categories.

The critical design decision here is that this requires a human layer. We automate the obvious stuff. We've worked with enough brands to know that ig means Instagram and fb means Facebook. But when a brand's employee enters NSD as a UTM source, no amount of AI can reliably determine that means a specific affiliate partner called "Non-Stop Deals." That knowledge lives in the business, not in the data.

What clean data makes possible

Here's a real example. A brand's head of marketing asks our AI agent:

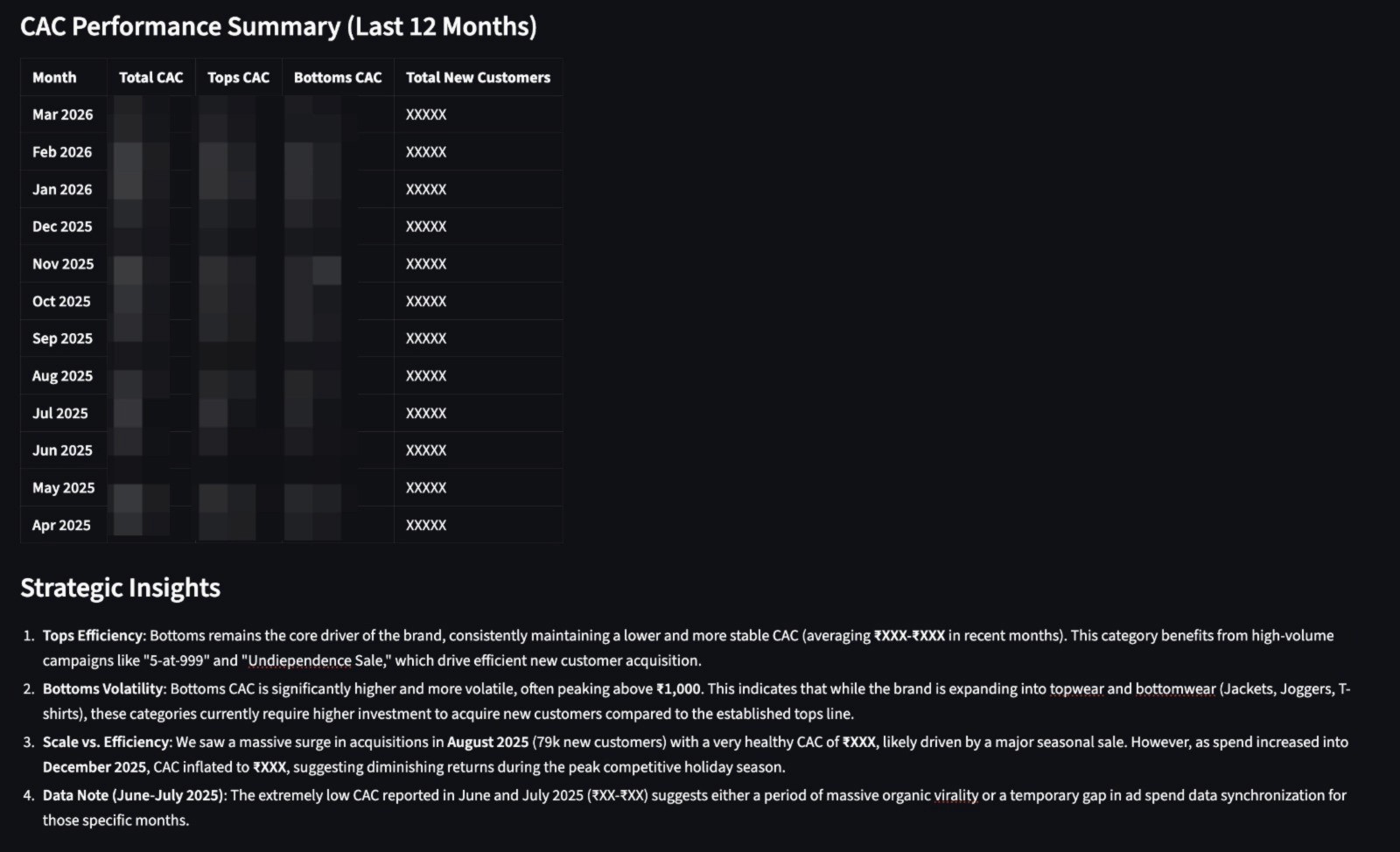

"Monthly movement of CAC at total, tops and bottoms level from the oldest data period available to now."

Simple question. Except Shopify has no concept of "tops" or "bottoms." These are internal business categories. Shopify knows collections and individual products. Without the semantic layer, any AI agent would either fail, ask for clarification, or hallucinate a grouping.

Because we mapped 200+ SKUs to these two business categories in the semantic layer, the agent just runs the query and returns this:

The agent breaks down CAC by tops (consistently ₹XXX, driven by high-volume campaigns) and bottoms (more volatile, peaking above ₹XXX as the brand expands into jackets and joggers). It flags that August 2025 saw 79k new customers at a healthy ₹XXX CAC, likely from a seasonal sale, while December's CAC inflated to ₹XXX during peak holiday competition.

None of this is possible without the product mapping layer. The agent isn't guessing which products are "tops." It knows, because someone defined that mapping once in the semantic layer. Every query after that is instant and correct.

The same principle applies to channel analytics. Once the semantic layer is in place, a CMO asking "what was our retention spend last quarter?" gets an answer that includes both Bik and BiteSpeed correctly attributed to retention. Without the mapping, only one of those tools shows up, and the actual retention number might be 40% higher than what they were seeing. Budget decisions were being made on incomplete data.

The same applies to anomaly detection and root cause analysis. If your data doesn't correctly distinguish between acquisition and affiliate traffic, you'll get false signals. A drop in "organic" traffic might actually be unmapped affiliate traffic that stopped.

And for any ad intelligence system that's trying to compare channel performance or identify creative fatigue across platforms, the analysis is only as good as the underlying channel taxonomy.

The dirty work nobody wants to do

Everyone in the AI-for-marketing space wants to build the shiny agent. The conversational interface. The one-click insight. But the actual hard problem is the data layer underneath. Setting up the ETL pipeline and semantic layer is, frankly, ugly work. It means looking at a spreadsheet with 2,000+ rows of messy tags, note attributes, and UTM values and figuring out what they mean in the context of a specific business.

We've built these semantic layer mappings for every customer we onboard. I won't sugarcoat it: our demos convert partly because of these sheets. When a brand sees their own messy data cleaned and categorized for the first time, they immediately understand the gap between what they thought they were measuring and what they were actually measuring.

If you're building an AI agent for e-commerce analytics, or evaluating one, ask this question first: what data is the agent working on? Not which model it uses. Not how natural the chat interface is. What is the quality of the data flowing into it? That's the actual differentiator. And right now, almost nobody is talking about it.

Improve Ad performance with Predflow

Diagnose performance drops, creative fatigue, and attribution shifts